Quantum Timelines Just Got Shorter: A Practical PQC Migration Playbook

For years, post-quantum cryptography felt like a future-program problem. Important, yes. Urgent, not yet.

That posture is getting harder to defend.

A new wave of public estimates has shifted the conversation from “prepare eventually” to “ship now.” The triggering event was a front-page Hacker News post, “A cryptography engineer’s perspective on quantum computing timelines”, which linked to Filippo Valsorda’s argument that the timeline for cryptographically relevant quantum computers may have compressed to the end of this decade.

If you run systems that depend on RSA or elliptic-curve cryptography for long-lived confidentiality and identity, this is not a thought exercise anymore. It is a migration program.

What Actually Changed

The shift did not come from one viral take. It came from multiple signals lining up.

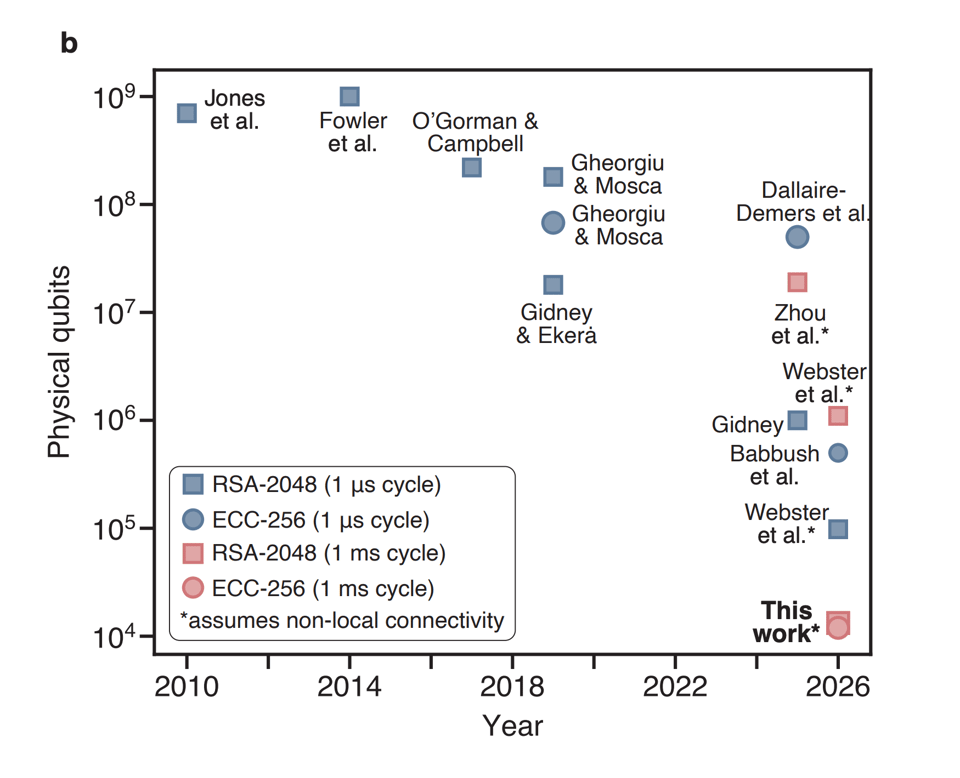

The first is Google’s March 2026 disclosure of updated quantum resource estimates for ECDLP-256, the 256-bit elliptic-curve discrete logarithm problem whose hardness underpins P-256 and secp256k1. Google describes compiled circuits with under 1,500 logical qubits and tens of millions of Toffoli gates, and estimates that, under certain hardware assumptions, the attack could run on fewer than 500,000 physical qubits and finish in minutes. Their framing is explicit: this supports a 2029 migration target for post-quantum cryptography.

The second is independent work from Oratomic (arXiv:2603.28627), arguing that neutral-atom architectures with non-local connectivity could run cryptographically relevant Shor workloads on far fewer physical qubits than the traditional million-qubit estimates. Their abstract states that, under their assumptions, a P-256 discrete log could be computed in days with 26,000 physical qubits.

The third is a policy and engineering shift from practitioners. Valsorda’s position moved from “roll out PQ key exchange first and take more time on signatures” to a blunt claim: if organizations want to be done in time, they should deploy what exists now, including large ML-DSA signatures in ecosystems that were designed around small ECDSA artifacts.

No single paper proves the exact year a CRQC arrives. But risk programs are not built on certainty; they are built on downside.

The Risk Model Most Teams Get Wrong

Many teams still evaluate the question this way:

“Are we sure a cryptographically relevant quantum computer exists by 2030?”

That is the wrong decision lens.

The operational question is:

“Can we afford being wrong if it exists by 2030 and we are not done migrating?”

For systems with short-lived secrets and no durable archives, that may be tolerable. For systems with long-lived encrypted data, hardware roots of trust, durable signatures, or ecosystem-wide identities, the answer is usually no.
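One common way to make this question concrete is the inequality usually attributed to Michele Mosca: if the time your data must stay secret plus the time you need to migrate exceeds the time until a CRQC exists, some of that data is exposed. The sketch below is only an illustration; the function name and the example numbers are assumptions, not estimates taken from the sources above.

```python
def exposed_years(shelf_life_years: float,
                  migration_years: float,
                  years_to_crqc: float) -> float:
    """Years during which still-sensitive data would be readable.

    Implements the check shelf_life + migration > time_to_crqc.
    Returns 0.0 when migration finishes with enough margin.
    """
    gap = shelf_life_years + migration_years - years_to_crqc
    return max(0.0, float(gap))


# Hypothetical inputs: records sensitive for 10 years, a 3-year migration,
# and a planning assumption that a CRQC could exist in 4 years.
archive_gap = exposed_years(10, 3, 4)

# Hypothetical short-lived secrets: 1-year sensitivity, 1-year migration.
session_gap = exposed_years(1, 1, 4)

print(archive_gap, session_gap)
```

The point of the exercise is that `years_to_crqc` is the only input you do not control, so the decision rests on the other two.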

The second mistake is treating PQ migration as a library upgrade. It is not. It is a protocol and lifecycle upgrade:

- key exchange choices

- certificate and signature formats

- wire compatibility and fallback logic

- key rotation cadence

- HSM and KMS integration

- client/server rollout sequencing

- incident response for downgrade pressure

This is a program, not a patch.

Key Exchange vs. Signatures: The Asymmetry

A useful distinction from the cryptography community is that confidentiality and authentication do not migrate with the same complexity profile.

Key exchange migration to ML-KEM is comparatively straightforward in many modern protocols. Hybrid approaches are practical, and many stacks already have implementation paths.
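The idea behind a hybrid key exchange is that the session key is derived from both a classical shared secret and an ML-KEM shared secret, so an attacker has to break both. The sketch below shows only that combining step, using the Python standard library. The two input secrets are random placeholder bytes standing in for real X25519 and ML-KEM-768 outputs (both are 32 bytes), and the label and function names are hypothetical. Real protocols define their own combiner and transcript binding, so treat this as an illustration rather than a wire-compatible implementation.

```python
import hashlib
import hmac
import os


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """HKDF-Extract (RFC 5869) with SHA-256."""
    return hmac.new(salt, ikm, hashlib.sha256).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF-Expand (RFC 5869) with SHA-256."""
    out, block, counter = b"", b"", 1
    while len(out) < length:
        block = hmac.new(prk, block + info + bytes([counter]),
                         hashlib.sha256).digest()
        out += block
        counter += 1
    return out[:length]


def combine_hybrid(pq_ss: bytes, classical_ss: bytes, context: bytes) -> bytes:
    """Derive one session key from both secrets.

    Both inputs are fixed-length (32 bytes), so plain concatenation is
    unambiguous. The label below is a made-up example value.
    """
    if len(pq_ss) != 32 or len(classical_ss) != 32:
        raise ValueError("expected two 32-byte shared secrets")
    prk = hkdf_extract(b"\x00" * 32, pq_ss + classical_ss)
    return hkdf_expand(prk, b"hybrid-demo|" + context)


# Placeholders standing in for real key-exchange outputs.
pq_ss = os.urandom(32)
classical_ss = os.urandom(32)
session_key = combine_hybrid(pq_ss, classical_ss, b"example-transcript-hash")
```

Because both secrets feed the same derivation, recovering the classical secret alone is not enough to reproduce `session_key`.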

Authentication is harder. Signatures are embedded everywhere: X.509 cert chains, protocol handshakes, code signing workflows, package metadata, identity systems, secure boot, firmware updates, and legal records. Signature size and verification characteristics spill into every layer that assumed ECDSA-era constraints.
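To get a feel for the size pressure, the following sketch compares the key and signature bytes carried by a simplified TLS-style handshake. The ML-DSA sizes are the parameter-set values from FIPS 204; the ECDSA P-256 sizes assume an uncompressed public key and a raw (r, s) signature. The handshake model itself is an assumption for illustration: each certificate sent contributes one public key and one signature, plus one more signature over the handshake, and everything else (names, extensions, SCTs, encoding overhead) is ignored.

```python
# Sizes in bytes. ML-DSA values are from FIPS 204; ECDSA P-256 values assume
# an uncompressed public key and a raw (r, s) signature.
SIZES = {
    "ECDSA-P256": {"public_key": 65, "signature": 64},
    "ML-DSA-44": {"public_key": 1312, "signature": 2420},
    "ML-DSA-65": {"public_key": 1952, "signature": 3309},
    "ML-DSA-87": {"public_key": 2592, "signature": 4627},
}


def handshake_auth_bytes(scheme: str, certs_sent: int = 2) -> int:
    """Key and signature bytes for `certs_sent` certificates plus one
    handshake signature, under the simplified model described above."""
    s = SIZES[scheme]
    per_cert = s["public_key"] + s["signature"]
    return certs_sent * per_cert + s["signature"]


for scheme in SIZES:
    print(f"{scheme:11s} {handshake_auth_bytes(scheme):6d} bytes")
```

Under this model a leaf-plus-intermediate chain with ML-DSA-44 carries roughly 9.9 KB of keys and signatures, against about 0.3 KB for ECDSA P-256. That gap is what spills into packet sizing, storage, and any format with a fixed-size signature field.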

That is why compressed timelines are disruptive. If you thought signatures could wait until the 2030s, you had architectural breathing room. If your target is effectively 2029, you are now compressing redesign and deployment into one cycle.

NIST Standards Give You a Starting Point, Not an Endpoint

The good news is the core building blocks are no longer speculative. NIST finalized FIPS 203 (ML-KEM) in August 2024, alongside FIPS 204 (ML-DSA) and FIPS 205 (SLH-DSA), so standardized primitives now exist for both key exchange and signatures.

But standards availability does not equal deployment readiness.

You still need to answer practical questions:

- Which trust boundaries must become PQ-first this year?

- Where can you tolerate hybrid transitional states, and for how long?

- What backwards-compatibility paths create unacceptable downgrade risk?

- Which systems can be retired instead of migrated?

Teams that skip this inventory end up with symbolic migration: a few PQ-compatible endpoints, but no coherent security posture.

A Concrete 4-Phase Migration Program

If you need a pragmatic implementation path, use this sequence.

Phase 1: Exposure Mapping (Now)

Build a cryptographic asset inventory tied to data lifetime and blast radius.

At minimum, classify:

- inbound and outbound TLS surfaces

- service-to-service mTLS

- SSH and administrative channels

- certificate authorities and issuance pipelines

- code signing and package provenance

- encrypted-at-rest artifacts with multi-year sensitivity

- hardware attestation and TEE dependencies

If your inventory cannot answer “where do we still rely on RSA/ECC for identity or confidentiality?” within a week, that is your first blocker.
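An inventory that can answer that question does not need to be elaborate. The sketch below assumes a flat list of records with a handful of fields; the field names, surface labels, and example entries are all hypothetical, and a real inventory would be populated from scanners, certificate stores, and configuration rather than written by hand.

```python
from dataclasses import dataclass

# Algorithm families broken by Shor's algorithm. Extend as needed.
QUANTUM_VULNERABLE = {"RSA", "DH", "ECDH", "ECDSA", "X25519", "Ed25519"}


@dataclass(frozen=True)
class CryptoAsset:
    name: str
    surface: str                 # e.g. "tls-inbound", "code-signing"
    algorithm: str               # algorithm family in use today
    purpose: str                 # "confidentiality" or "identity"
    data_lifetime_years: float   # how long the protected data or trust matters


def exposure_report(assets):
    """Return quantum-vulnerable assets, longest-lived first."""
    exposed = [a for a in assets if a.algorithm in QUANTUM_VULNERABLE]
    return sorted(exposed, key=lambda a: a.data_lifetime_years, reverse=True)


# Hypothetical example entries.
inventory = [
    CryptoAsset("public-api", "tls-inbound", "X25519", "confidentiality", 1),
    CryptoAsset("backup-vault", "at-rest", "RSA", "confidentiality", 15),
    CryptoAsset("release-signing", "code-signing", "ECDSA", "identity", 7),
    CryptoAsset("mesh-gateway", "mtls", "ML-KEM", "confidentiality", 1),
]

for asset in exposure_report(inventory):
    print(f"{asset.name:16s} {asset.algorithm:7s} {asset.purpose:15s} "
          f"{asset.data_lifetime_years:>4} yrs")
```

Sorting by data lifetime puts the assets with the least slack at the top, which is usually the order in which they should be scheduled.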

Phase 2: ML-KEM Defaulting (Near-Term)

Prioritize key exchange surfaces with clear implementation support. Drive toward PQ-capable defaults, not optional toggles hidden behind feature flags nobody enables.

During this phase, track and reduce non-PQ negotiation paths aggressively. Every persistent fallback is future downgrade debt.
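Tracking fallback can start as a simple ratio per endpoint: what share of handshakes still negotiated a classical-only group. The sketch below assumes you can already export `(endpoint, negotiated_group)` pairs from your TLS terminators; that record shape, the endpoint names, and the sample data are hypothetical. `X25519MLKEM768` is the hybrid group name registered for TLS 1.3; add whatever other PQ-capable groups your stack negotiates.

```python
from collections import Counter

# Groups counted as PQ-capable. Assumption: adjust to match your stack.
PQ_GROUPS = {"X25519MLKEM768"}


def classical_share(handshakes):
    """Map each endpoint to the fraction of handshakes that were classical-only.

    `handshakes` is an iterable of (endpoint, negotiated_group) pairs.
    """
    total, classical = Counter(), Counter()
    for endpoint, group in handshakes:
        total[endpoint] += 1
        if group not in PQ_GROUPS:
            classical[endpoint] += 1
    return {ep: classical[ep] / total[ep] for ep in total}


# Hypothetical sample of exported handshake records.
sample = [
    ("api", "X25519MLKEM768"),
    ("api", "x25519"),
    ("api", "X25519MLKEM768"),
    ("api", "X25519MLKEM768"),
    ("admin", "secp256r1"),
]

shares = classical_share(sample)
for endpoint, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{endpoint:8s} {share:.0%} classical-only")
```

Reviewing this number on a schedule, and asking who owns each endpoint where it is not falling, is one way to keep fallbacks from becoming permanent.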

Phase 3: Signature Surface Refactor (Parallel, Not Later)

Do not wait for perfect protocol ergonomics. Start redesigning certificate, identity, and artifact-signing flows now.

Expect painful details: larger signatures, modified chain handling, storage growth, packet sizing, and ecosystem coordination. This is where most timelines fail, so start here earlier than your instincts suggest.

Phase 4: Cutover Governance and Red-Team Validation

A migration is only complete when rollback and downgrade behavior are understood under adversarial conditions.

Run explicit exercises for:

- downgrade coercion at handshake boundaries

- mixed-fleet compatibility failures

- stale certificate and key material

- emergency re-issuance under outage pressure

Then establish a governance gate: what must be true before classical-only paths are forbidden in production.
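A governance gate is easier to enforce when it is written down as an explicit check rather than a meeting outcome. The sketch below is one possible shape, assuming the four exercises above each produce a pass/fail result and that you measure the remaining share of classical-only handshakes. Every field name and the 1% default threshold are hypothetical placeholders for values your own program would set.

```python
from dataclasses import dataclass


@dataclass
class GateInputs:
    classical_share: float            # fraction of handshakes still classical-only
    downgrade_drill_passed: bool      # downgrade coercion exercise
    mixed_fleet_drill_passed: bool    # mixed-fleet compatibility exercise
    reissuance_drill_passed: bool     # emergency re-issuance exercise
    stale_material_count: int         # stale certificates and keys still deployed


def gate_failures(g: GateInputs, max_classical_share: float = 0.01) -> list:
    """Return the reasons the gate is closed; an empty list means it is open."""
    failures = []
    if g.classical_share > max_classical_share:
        failures.append("classical-only traffic above threshold")
    if not g.downgrade_drill_passed:
        failures.append("downgrade coercion drill not passed")
    if not g.mixed_fleet_drill_passed:
        failures.append("mixed-fleet drill not passed")
    if not g.reissuance_drill_passed:
        failures.append("emergency re-issuance drill not passed")
    if g.stale_material_count > 0:
        failures.append("stale certificate or key material remains")
    return failures


# Hypothetical current state of one environment.
state = GateInputs(0.04, True, True, False, 0)
blockers = gate_failures(state)
print("gate open" if not blockers else "gate closed: " + "; ".join(blockers))
```

Returning the list of reasons, rather than a single boolean, gives the owning teams a concrete punch list for the next review.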

Systems That Need Extra Attention

Some domains have less slack than others.

Cryptographic identity ecosystems (social identity layers, wallet ecosystems, supply-chain trust graphs) cannot rely on emergency migration after a breakthrough. If compromise can impersonate users irreversibly, migration must complete before the event, not after.

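Long-lived encrypted archives face the “store now, decrypt later” risk profile: an adversary records ciphertext today and decrypts it once a CRQC is available. If data sensitivity outlives your migration window, traffic protected by classical key exchange today can become plaintext tomorrow.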

TEE-centric designs are another weak spot. Hardware roots, attestation chains, and firmware trust anchors often have long replacement cycles and opaque vendor timelines. If these remain classical while your software stack migrates, you retain a hidden brittle core.

What to Stop Doing Immediately

To create execution bandwidth, kill the following behaviors now:

- launching new crypto-dependent protocols that are classical-only

- postponing PQ work until all libraries have perfect ergonomics

- treating hybrid mode as an end-state instead of a transition

- forcing security teams to justify migration with impossible certainty

The opportunity cost is too high. Every quarter spent debating whether this is “really urgent” is a quarter not spent closing inventory, compatibility, and operational gaps.

A Better Way to Communicate the Program

Executives and product owners often hear “post-quantum” as speculative R&D.

Translate it into business terms:

- confidentiality durability risk

- identity forgery and trust-chain risk

- compliance and contractual risk for long-retention data

- migration lead-time risk versus hardware uncertainty

When framed this way, the program is familiar: reduce irreversible downside before external timing uncertainty resolves.

The Bottom Line

The most important update from the current wave of research is not a guaranteed date. It is that the risk distribution moved enough that waiting for certainty is no longer a rational default.

If your roadmap still assumes a comfortable 2035+ window, treat this as a planning fault and correct it now.

Ship ML-KEM broadly. Start signature refactors immediately. Audit downgrade paths as if they are incidents waiting to happen. And run migration as a first-class reliability and security program, not a side quest.

If the timeline is wrong, you spent effort on a hard but useful modernization.

If the timeline is right and you wait, you lose the option to migrate safely.

References

- Hacker News front page item: A cryptography engineer’s perspective on quantum computing timelines

- A Cryptography Engineer’s Perspective on Quantum Computing Timelines (Filippo Valsorda)

- Google’s timeline for PQC migration

- Safeguarding cryptocurrency by disclosing quantum vulnerabilities responsibly (Google Research)

- Shor’s algorithm is possible with as few as 10,000 reconfigurable atomic qubits (arXiv:2603.28627)

- FIPS 203: Module-Lattice-Based Key-Encapsulation Mechanism Standard (NIST)