Vibe Speccing: You're Vibe Coding Wrong, and Here's the Fix

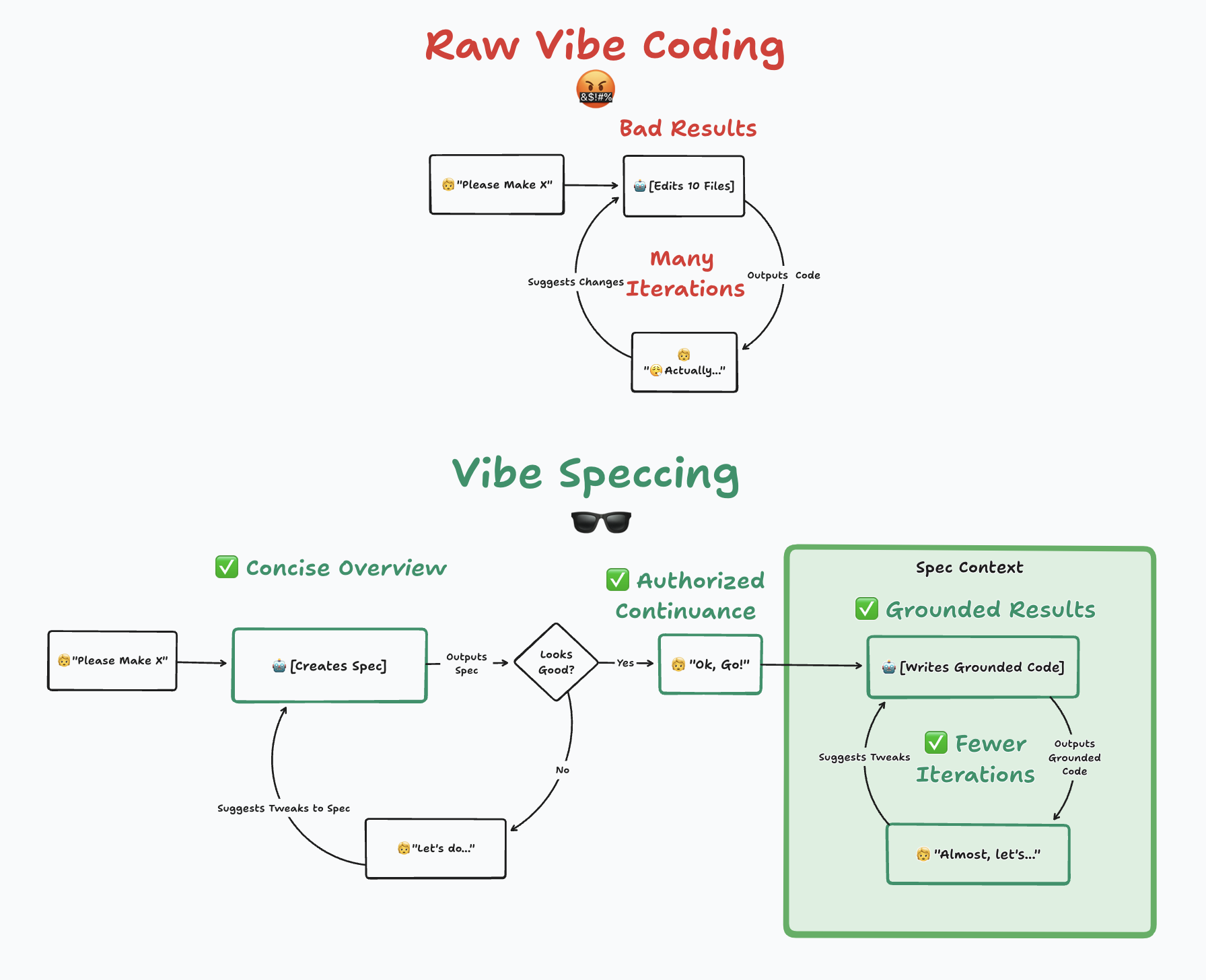

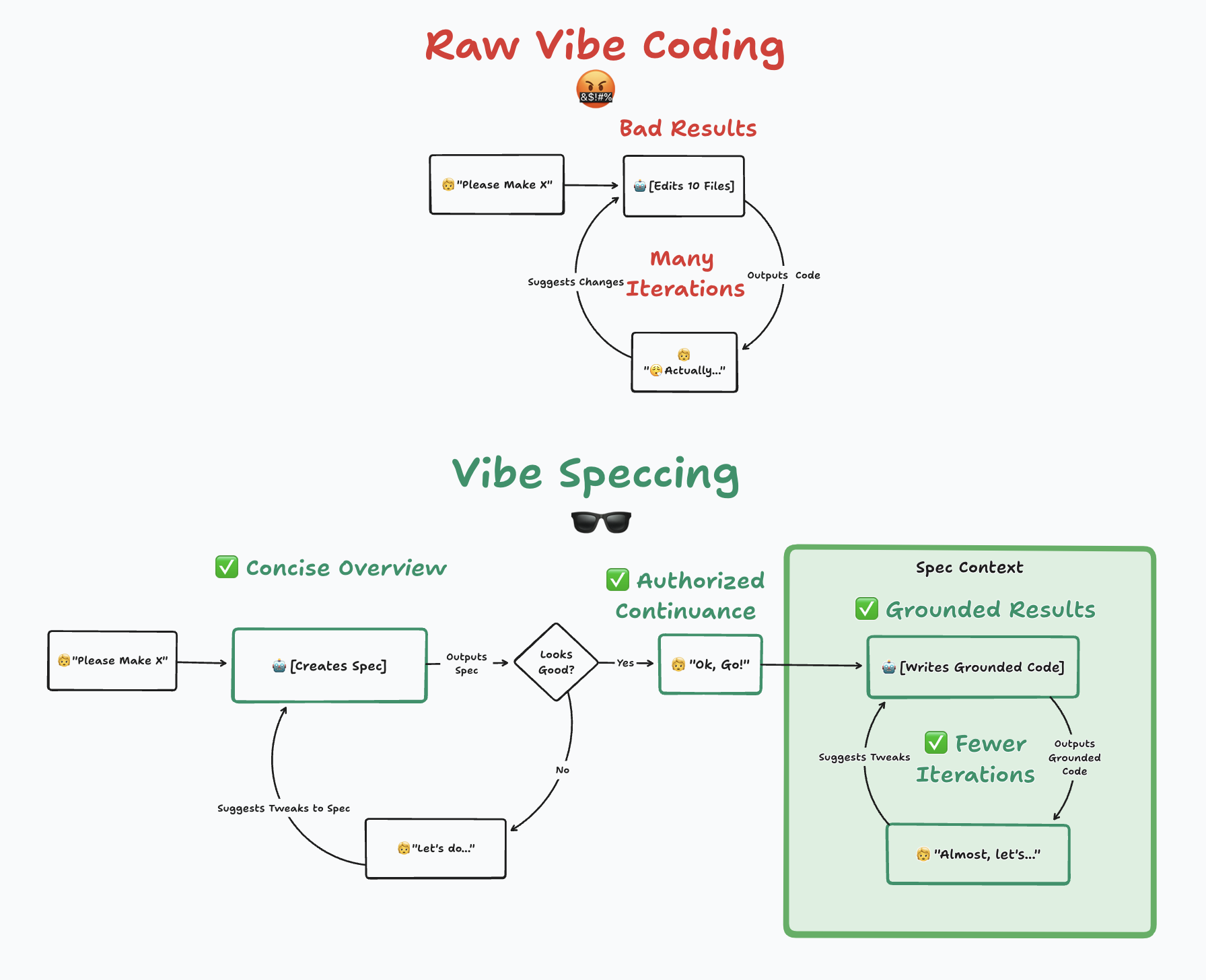

There’s a dirty secret in the vibe coding world that nobody wants to talk about: most of us are doing it wrong.

We open our AI-powered IDE, type something like “create a widget that handles user data”, and then watch in horror as the AI generates 49 files across six architectures, complete with fuzzy matching, caching layers, and analytics dashboards we never asked for. We spend the next three hours trying to untangle the mess, eventually give up, git checkout ., and pretend it never happened.

Sound familiar? I thought so.

The problem isn’t the AI. The problem is us. Or more precisely, the problem is that we’re skipping the single most important step in any development workflow—knowing what we actually want to build.

The Real Skill Isn’t Prompting. It’s Context.

Andrej Karpathy nailed it when he reframed “prompt engineering” as context engineering. The term is better because it captures what’s actually happening: you’re filling a context window with information, and the quality of that information determines the quality of the output.

Too little context and your LLM hallucinates features you didn’t want. Too much irrelevant context and you burn tokens while quality drops. The sweet spot is structured, dense, precisely calibrated information that tells the model exactly what matters.

This is where most vibe coders go wrong. They treat the AI like a mind reader instead of treating it like what it actually is: a very capable but very literal junior developer who needs clear instructions.

Think about it this way. If you hired a contractor to renovate your kitchen and said “make it nice”, you’d deserve whatever you got. But that’s exactly what we do with AI every single day.

The Fix: Make Your AI Write a Spec First

Here’s the thing that changed my workflow completely: don’t write the spec yourself. Make the AI write it for you.

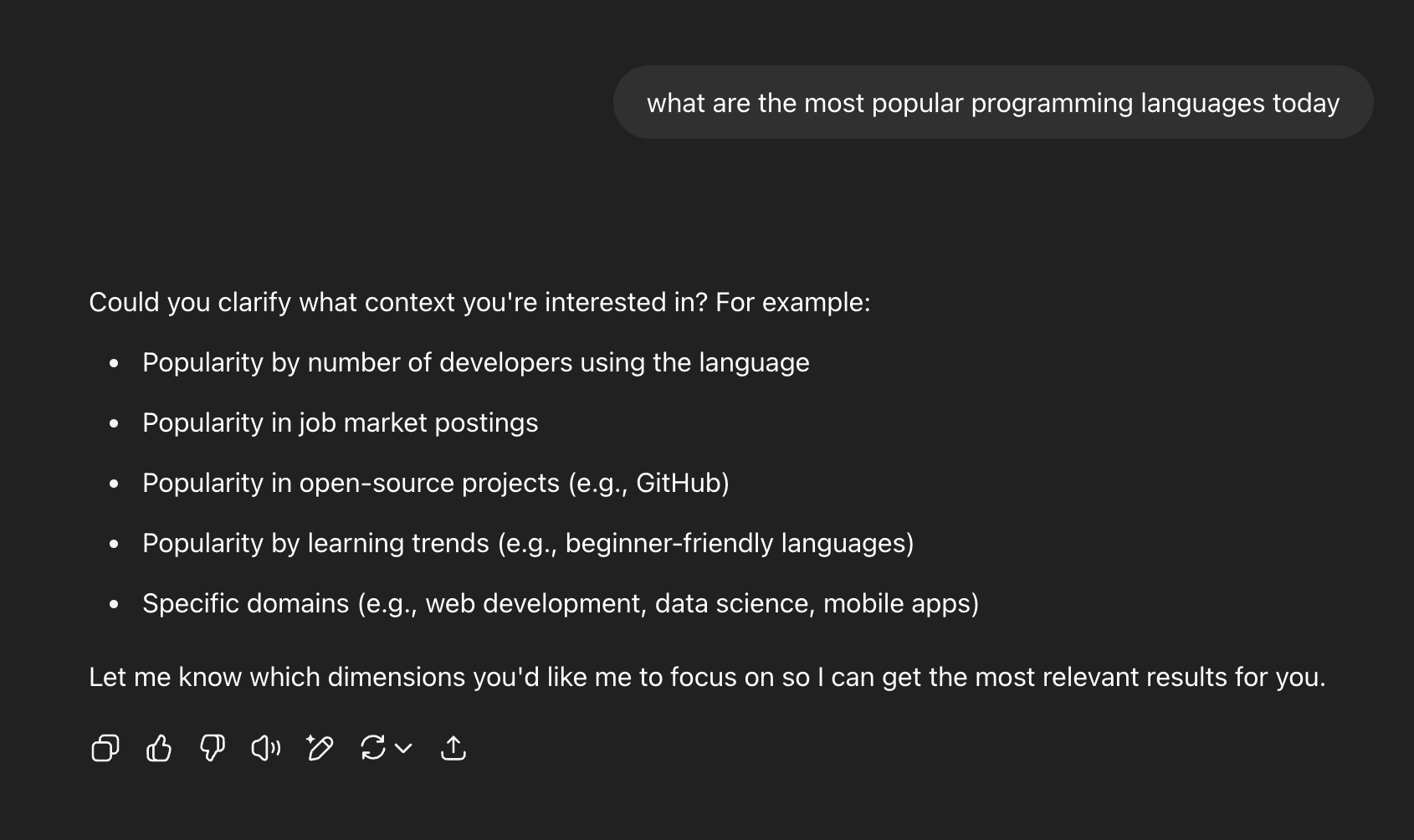

The trick is to set up your AI IDE rules so that before any coding begins, the AI automatically asks: “Should I create a spec for this task first?” Then it interviews you—asking about objectives, success criteria, constraints, scope, and what’s explicitly out of bounds. Five minutes of this structured conversation produces a requirements document that becomes the foundation for everything that follows.

The workflow looks like this:

- You describe what you want (even vaguely—that’s fine)

- The AI asks clarifying questions instead of immediately writing code

- A spec gets generated with clear scope, constraints, and success criteria

- You review and approve (or iterate until it’s right)

- Only then does code get written

The magic is in step 2, the clarifying questions. Instead of you trying to think of every edge case upfront, the AI surfaces questions you didn’t even know you needed to answer. What database are you using? Do you need pagination? What happens on error? Each answer tightens the scope and reduces the chance of getting code you don’t want.
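One way to picture the interview is as filling in a structured record, where every empty section is a question the AI still has to ask. The sketch below is only an illustration: the section names follow the interview topics mentioned earlier (objectives, success criteria, constraints, scope, out of bounds), while the `Spec` type, its field names, and the `openQuestions` helper are hypothetical and not part of any tool.

```typescript
// Section names follow the interview topics in the article; the field names are hypothetical.
interface Spec {
  objective: string;
  successCriteria: string[];
  constraints: string[];
  inScope: string[];
  outOfScope: string[];
}

// Returns the sections that are still missing or empty, i.e. what the AI should ask about next.
function openQuestions(draft: Partial<Spec>): (keyof Spec)[] {
  const sections: (keyof Spec)[] = [
    "objective",
    "successCriteria",
    "constraints",
    "inScope",
    "outOfScope",
  ];
  return sections.filter((key) => {
    const value = draft[key];
    if (value === undefined) return true;
    return typeof value === "string" ? value.trim() === "" : value.length === 0;
  });
}

// A half-finished spec after the first couple of answers.
const draft: Partial<Spec> = {
  objective: "Search blog posts by title and content",
  constraints: ["PostgreSQL", "case-insensitive partial matching"],
  outOfScope: ["fuzzy matching", "caching", "analytics"],
};

console.log(openQuestions(draft)); // [ 'successCriteria', 'inScope' ]
```

For this draft, the two sections still open are the success criteria and the in-scope list, so those are the next questions to answer before the spec is ready for review.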

What This Actually Looks Like in Practice

Let me show you the difference with a concrete example.

The Old Way (Vibe Coding Without a Spec)

You type: “Help me create an API route that handles search functionality.”

The AI immediately generates three files: a 45-line pages/api/search.js with full-text search, a 28-line utils/searchHelpers.js with fuzzy matching and ranking algorithms, and modifications to database.js adding a caching layer. It implements pagination, filters, result highlighting, and analytics tracking. None of which you asked for.

You try it. It doesn’t work because it assumed you had Elasticsearch when you’re running Postgres. You spend an hour trying to fix it, then give up.

The New Way (Vibe Speccing)

Same starting prompt: “Help me create an API route that handles search functionality.”

But this time the AI asks: What are users searching? Which fields? What matching behavior? What database? What are your performance requirements?

You answer: blog posts, title and content fields, case-insensitive partial matching, PostgreSQL, small blog so performance isn’t critical.

The AI writes a 24-line implementation with simple ILIKE queries that does exactly what you need. No fuzzy matching. No caching. No analytics. Just clean, working code that solves your actual problem.
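To make that concrete, here is a minimal sketch of the kind of query such a spec leads to. It is not the actual 24-line implementation. It assumes a hypothetical posts table with id, title, and content columns, and node-postgres-style `$1` placeholders. The function only builds the query text and parameters, so it runs without a database.

```typescript
// Hypothetical schema: posts(id, title, content). Adjust names to your own tables.
interface SearchQuery {
  text: string;
  values: string[];
}

// Escape LIKE wildcards so user input such as "50%" is matched literally.
// Backslash is PostgreSQL's default escape character for LIKE and ILIKE.
function escapeLike(term: string): string {
  return term.replace(/[\\%_]/g, (ch) => "\\" + ch);
}

// Case-insensitive partial match on title and content, and nothing else:
// no fuzzy matching, no caching, no analytics.
function buildSearchQuery(term: string): SearchQuery {
  const pattern = `%${escapeLike(term.trim())}%`;
  return {
    text:
      "SELECT id, title FROM posts " +
      "WHERE title ILIKE $1 OR content ILIKE $1 " +
      "ORDER BY id DESC",
    values: [pattern],
  };
}

const query = buildSearchQuery("postgres");
console.log(query.values); // [ '%postgres%' ]
```

The search term travels as a parameter instead of being concatenated into the SQL string, which keeps the route safe from injection without adding any of the features the spec ruled out.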

That’s the difference. Give the AI a vague vibe, get vague vibe output. Give it a crisp spec, get crisp output.

Why This Works So Well

The spec-first approach solves at least seven real problems I’ve personally battled with:

Chat drift dies. Long exploratory conversations confuse LLMs. A spec is a stable, structured document that the AI can reference cleanly instead of trying to parse 47 messages of you changing your mind.

Projects become resumable. Ever abandon a side project because you lost context? With a spec committed to git, you can come back weeks later, hand it to a fresh AI session, and pick up exactly where you left off.

Scope creep gets killed. When the spec says “case-insensitive partial matching” and nothing about fuzzy search, the AI doesn’t add fuzzy search. Ambiguity is where feature creep breeds, and specs eliminate ambiguity.

Blank page paralysis vanishes. It’s psychologically easier to critique a draft than to create from scratch. Letting the AI write the first draft of requirements takes the pressure off the hardest part—figuring out what you actually want.

Collaboration becomes possible. Chat histories are personal and ephemeral. A spec can be shared with teammates, reviewed in PRs, and evolved through git history. Your AI-assisted work becomes a team sport.

Token efficiency improves. Dense structured specs give LLMs exactly what they need without the noise of exploratory back-and-forth. You spend fewer tokens and get better results.

Version control works again. Git can’t track AI conversations. But it can track spec files. You get a full history of how your requirements evolved over time.

The Evidence Is Hard to Ignore

This isn’t just theory. Luke Bechtel, who popularized the Vibe Speccing concept, reports roughly a 60% reduction in feature development time after adopting this approach. Before specs, feature work took 2-3 hours of building the wrong thing. After specs, it’s 10-20 minutes of planning followed by about an hour of building the right thing.
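As a rough sanity check on those numbers, the arithmetic can be sketched in a few lines. The `reductionPercent` helper is hypothetical, and treating “about an hour” as exactly 60 minutes is my assumption, not a figure from Bechtel.

```typescript
// Percentage reduction when a task goes from `beforeMin` to `afterMin` minutes.
function reductionPercent(beforeMin: number, afterMin: number): number {
  return ((beforeMin - afterMin) / beforeMin) * 100;
}

// Before: 2-3 hours. After: 10-20 minutes of planning plus about 60 minutes of building.
const bestCase = reductionPercent(180, 10 + 60); // slowest "before", fastest "after"
const worstCase = reductionPercent(120, 20 + 60); // fastest "before", slowest "after"

console.log(Math.round(bestCase), Math.round(worstCase)); // 61 33
```

Under these assumptions the saving lands somewhere between a third and about 60%, with the headline figure matching the favorable end of the quoted ranges.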

The academic world is catching up too. Recent research from Dreossi et al. (2024) argues that specifications are “the missing link” in making LLM-based software development trustworthy. And industry players are validating the pattern: OpenAI’s Deep Research mode pauses to ask clarifying questions before spending compute, and Shopify’s AI features all start with comprehensive specs before any code is generated.

The pattern is everywhere once you see it: the best AI-assisted work starts with requirements, not code.

“But I Need to Move Fast!”

I hear this objection constantly. “I’m prototyping! I’m in a hackathon! I don’t have time for specs!”

Here’s my counter: you don’t have time NOT to write specs.

Five minutes of structured conversation with your AI saves hours of refactoring code that solves the wrong problem. Speed without direction isn’t velocity—it’s just expensive randomness. It doesn’t matter how quickly you can create something if it’s useless.

And for truly exploratory work? Write an “exploration spec.” Define what you’re trying to learn, set time bounds, establish what success looks like. Then explore freely within those constraints. After you’ve learned what you need, write a proper spec for the real implementation.

How to Get Started (5 Minutes)

The setup is dead simple:

- Add a rule to your AI IDE (Cursor, Windsurf, Claude, whatever you use) that tells the AI to always propose writing a spec before coding

- The rule should define three phases: spec creation (interview + document), review (iterate until approved), and implementation (code only after approval)

- Store specs in your project, for example .cursor/scopes/FeatureName.md for committed specs, or a .local/ subdirectory for throwaway experiments

- Start your next task by typing what you want, then follow the AI through the spec process

- Say “GO!” when the spec looks right, and watch the AI build exactly what you described

That’s it. No complex tooling. No frameworks. Just a rule that says “ask before you build.”
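If it helps to see the three phases written down, they amount to a small state machine in which code is only allowed after approval. The sketch below models the rule’s logic, not how any particular IDE enforces it. The phase names come from the setup steps above, and the event names and helper functions are hypothetical.

```typescript
// The three phases named in the rule.
type Phase = "spec" | "review" | "implementation";

// Hypothetical event names; "approved" corresponds to saying "GO!".
type WorkflowEvent = "spec_drafted" | "changes_requested" | "approved";

// Returns the next phase, or throws if the event is not allowed in the current phase.
function nextPhase(current: Phase, event: WorkflowEvent): Phase {
  switch (current) {
    case "spec":
      if (event === "spec_drafted") return "review";
      break;
    case "review":
      if (event === "changes_requested") return "spec";
      if (event === "approved") return "implementation";
      break;
    case "implementation":
      break;
  }
  throw new Error(`"${event}" is not allowed during the ${current} phase`);
}

// Code may only be written once the spec has been approved.
function canWriteCode(phase: Phase): boolean {
  return phase === "implementation";
}

const afterDraft = nextPhase("spec", "spec_drafted"); // "review"
const afterApproval = nextPhase(afterDraft, "approved"); // "implementation"
console.log(canWriteCode(afterDraft), canWriteCode(afterApproval)); // false true
```

The only path into implementation runs through review, which is the whole point of the rule: the AI cannot skip from a vague request straight to code.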

The Bigger Picture

Here’s what I think is the most important takeaway: in the age of AI-assisted development, every developer becomes their own product manager. The hardest part of software engineering is no longer writing code—LLMs handle that increasingly well. The hardest part is knowing what code to write.

Vibe Speccing is the acknowledgment that we need to get better at the requirements side of the equation. The AI can write the code. But only you can define the problem. And if you don’t define it clearly, no amount of AI capability will save you from building the wrong thing very efficiently.

The future of AI-assisted development isn’t better code generation. It’s better requirement articulation.

LLM → Spec → Code. That’s the workflow. Try it once, and you’ll never go back to raw vibe coding again.